Apple Confirms Siri Overhaul with Google's 1.2T Parameter Gemini Model, Launching March 2026

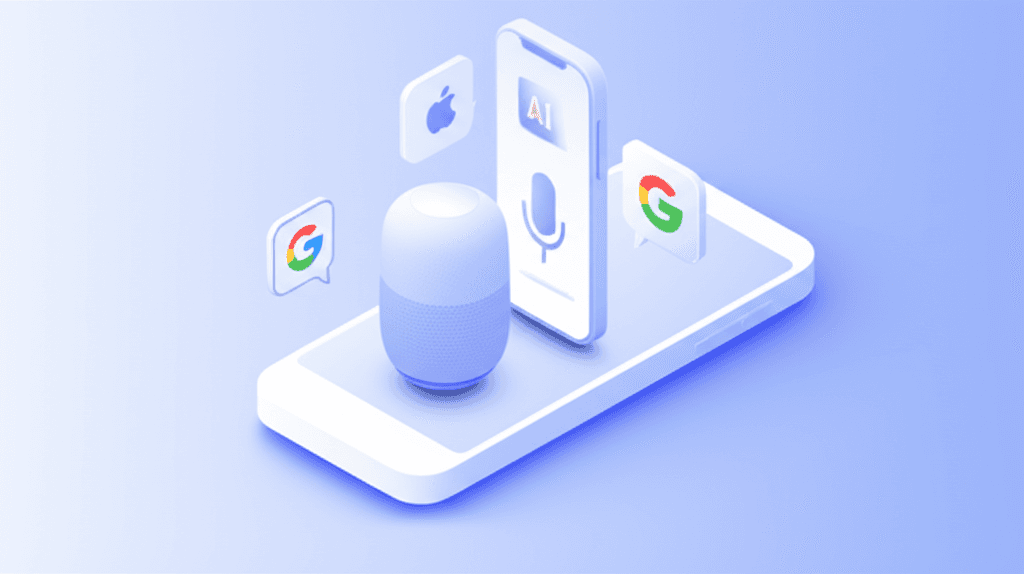

Apple is partnering with Google to integrate a 1.2 trillion parameter Gemini AI model into Siri, launching with iOS 26.4 in March 2026. It is the biggest shift in Apple's AI strategy to date, and a strategic retreat from building everything in-house.

Apple just confirmed what insiders have been whispering about for months: Siri is getting a complete AI overhaul, and it's powered by Google.

The company announced it will integrate Google's 1.2 trillion parameter Gemini model into Siri, launching with iOS 26.4 in March 2026. This isn't a small feature update; it changes who builds the intelligence behind Apple's assistant.

What's Actually Changing

Starting in March 2026, Siri will be powered by a hybrid system:

- On-device processing for simple commands and privacy-sensitive tasks (Apple's existing Neural Engine)

- Cloud-based Gemini for complex queries, multimodal understanding, and reasoning tasks

- Seamless fallback between the two, invisible to users
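
The routing between the two paths can be sketched as a small decision function. This is a minimal illustration only: Apple has not published its routing logic, and the category sets, the `Query` fields, and the `choose_route` function are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ON_DEVICE = "on_device"
    CLOUD = "cloud"


# Hypothetical categories, loosely based on the split described above.
SENSITIVE_DOMAINS = {"contacts", "photos", "messages", "health"}
SIMPLE_INTENTS = {"set_timer", "play_music", "toggle_setting"}


@dataclass
class Query:
    text: str
    intent: str                        # e.g. "set_timer" or "open_question"
    domains: frozenset = frozenset()   # personal data domains the query touches
    has_image: bool = False            # multimodal input attached


def choose_route(query: Query, cloud_allowed: bool = True,
                 cloud_reachable: bool = True) -> Route:
    """Pick a processing path for one query (illustrative rules only)."""
    # Fallback: with no network, or if the user opted out, stay local.
    if not cloud_allowed or not cloud_reachable:
        return Route.ON_DEVICE
    # Privacy-sensitive data never leaves the device in this sketch.
    if query.domains & SENSITIVE_DOMAINS:
        return Route.ON_DEVICE
    # Simple commands without images do not need the large model.
    if query.intent in SIMPLE_INTENTS and not query.has_image:
        return Route.ON_DEVICE
    # Everything else (reasoning, multimodal, open questions) goes to the cloud.
    return Route.CLOUD
```

In this sketch, `choose_route(Query("Set a timer", "set_timer"))` stays on device, while an open question with an attached image is sent to the cloud model. A real router would likely score queries rather than match fixed sets, which is where the edge cases come from.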

The 1.2 trillion parameter Gemini model is the same one Google uses for its most advanced AI features in Search and Workspace. It's significantly more capable than anything Apple has deployed at scale.

For users, this means:

- Natural conversation — Siri will understand context across multiple turns

- Visual understanding — Point your camera at something and ask "What is this?" or "How do I fix this?"

- Cross-app workflows — "Find the email from John about the project and add it to my calendar"

- Real-time information — Grounded in Google Search, not static training data

- Multilingual — Better understanding of code-switching and non-English languages

Why Apple Is Making This Move

This partnership is a strategic admission: Apple fell behind in AI.

Since 2017, Siri has been the butt of jokes. While Google Assistant and Alexa improved incrementally, Siri stayed frustratingly limited. Apple's privacy-first approach and reluctance to use cloud processing became a competitive disadvantage.

Meanwhile:

- Google deployed Gemini across Search, Android, and Workspace

- OpenAI's ChatGPT went from zero to 200M weekly users in under two years

- Microsoft integrated GPT-4 into Windows, Office, and Bing

- Meta released Llama models that startups used to build AI agents

Apple's internal AI efforts, reportedly built on a framework codenamed "Ajax" and a chatbot nicknamed "Apple GPT", weren't progressing fast enough to catch up. So Apple did something it almost never does: it partnered with a direct competitor.

The Google Side: Why This Deal Makes Sense

For Google, this is a massive win:

Distribution at scale: Siri ships on more than 2 billion active Apple devices. That's instant distribution for Gemini to hundreds of millions of users who would never download the Google app.

Data: Even with Apple's privacy controls, aggregate usage patterns from Siri queries will help Google improve Gemini. Apple isn't sharing personal data, but query types, failure modes, and edge cases are incredibly valuable.

Revenue: Apple is reportedly paying Google about $1 billion per year for this integration, the reverse of the $18-20B Google pays Apple for default search placement in Safari. This is a pure services revenue stream with minimal marginal cost.

Strategic positioning: If Apple had partnered with OpenAI instead, Google would have lost the mobile AI platform to Microsoft's ally. By securing the Apple deal, Google blocks OpenAI from the iOS ecosystem.

What About Privacy?

Apple is positioning this as "privacy-first AI", claiming:

- On-device processing for sensitive queries (contacts, photos, messages, health data)

- Private Cloud Compute for queries sent to Gemini — Apple claims Google won't see user identifiers or build profiles

- User control — Option to opt out of cloud processing entirely (though Siri will be less capable)

The technical implementation:

- Queries to Gemini are sent through Apple's servers, stripping identifiable information

- Google processes the query and returns results, but doesn't log the original request

- Apple stores encrypted query logs for debugging, deletable by users
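
The first step, a relay that strips identifying information before forwarding a query, might look roughly like the sketch below. It is an assumption-laden illustration, not Apple's implementation: the field names, the `anonymize_request` function, and the email-redaction rule are all hypothetical.

```python
import re
import uuid

# Hypothetical request fields that would identify a user or device.
IDENTIFYING_FIELDS = {"user_id", "device_id", "apple_id",
                      "ip_address", "precise_location"}

# Rough pattern for email addresses embedded in the query text.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def anonymize_request(request: dict) -> dict:
    """Return a copy of the request that is safe to forward (sketch only)."""
    # Drop every field that names the user or the device.
    forwarded = {k: v for k, v in request.items()
                 if k not in IDENTIFYING_FIELDS}
    # Redact email addresses that appear inside the free-text query.
    forwarded["text"] = EMAIL_RE.sub("[email]", request.get("text", ""))
    # Attach a random one-off ID so the response can be matched to the
    # request without linking it to any earlier query.
    forwarded["request_id"] = uuid.uuid4().hex
    return forwarded
```

Removing structured fields is the easy part. Free text is harder: names, addresses, and other details inside the query itself survive unless the relay also redacts them, which is why the email rule above is only a token gesture.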

Will this actually protect privacy? It's complicated.

Even anonymized query patterns reveal a lot. Google might not know that you asked "What's the fastest route to Stanford Hospital?", but it will know that someone in Palo Alto asked that question at 3 a.m. Aggregate enough of those signals, and you can infer patterns.

Apple is betting users will trust its privacy architecture. Google is betting the capability gains are worth the trade-off.
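
One way to see the risk is to count how many queries share the same coarse attributes. The sketch below is a k-anonymity-style check written for this article, not a method either company has described; the `region` and `hour` fields and the `small_buckets` function are hypothetical.

```python
from collections import Counter


def small_buckets(records, k=5):
    """Return (region, hour) buckets containing fewer than k queries."""
    # Count how many queries fall into each coarse (region, hour) bucket.
    counts = Counter((r["region"], r["hour"]) for r in records)
    # A bucket with very few members offers little cover to its members.
    return {bucket: n for bucket, n in counts.items() if n < k}


# One query at 3 a.m. stands out; six queries at 6 p.m. blend together.
records = ([{"region": "Palo Alto", "hour": 3}]
           + [{"region": "Palo Alto", "hour": 18}] * 6)
exposed = small_buckets(records, k=5)
```

Here `exposed` contains only the 3 a.m. bucket, with a count of 1. The query carries no user ID, yet it is the only one of its kind, so anyone who can match it against other data has a strong lead on who sent it.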

The Competitive Landscape

This deal reshapes the mobile AI market:

Winners:

- Google — Gemini becomes the default AI for iOS users, blocking OpenAI

- Apple — Catches up in AI without years of internal R&D

- Developers — SiriKit gets massive capability upgrades, enabling new app experiences

Losers:

- OpenAI — Lost the Apple partnership many expected, limiting ChatGPT's mobile distribution

- Amazon — Alexa falls further behind as Siri leaps ahead

- Samsung — Galaxy S26's multi-AI approach looks fragmented compared to Apple's integrated experience

What This Means For Your Business

If you're building iOS apps:

- Plan for Siri to become significantly more capable by Q2 2026

- Invest in App Intents and SiriKit integrations, which is how apps expose their actions to Siri and should finally pay off

- Consider voice-first experiences that weren't practical before

- Test extensively — the on-device vs cloud routing will have edge cases

If you're building AI products:

- Mobile distribution just got harder — Apple and Google locked down iOS

- Focus on differentiation, not general-purpose AI assistants

- Enterprise and desktop remain open battlegrounds

If you're an enterprise buyer:

- Siri may finally be usable for employee productivity in March 2026

- But evaluate data governance — where do your queries go?

- Don't assume privacy guarantees transfer to your specific use case

The March 2026 Timeline

Apple's launch timeline is aggressive:

- iOS 26.4 beta — Late February 2026 (likely available now to developers)

- Public release — Mid-March 2026

- Supported devices — iPhone 14 and newer, iPad Pro 2022+, Mac with M2+

- Phased rollout — US and UK first, expanding to other regions through Q2 2026

This is Apple's fastest major feature rollout in years. The company is clearly feeling pressure to ship AI capabilities before losing more market share to Android's AI features.

Looking Ahead

Watch for:

- Developer adoption — Will app makers rebuild experiences around the new Siri?

- Privacy audits — Security researchers will test the anonymization claims

- Competitive response — How will Samsung and other Android OEMs respond?

- User reception — Will Siri finally stop being a punchline?

- Google's leverage — As the AI provider, Google gains significant influence over Apple's roadmap

The biggest question: Is this partnership temporary or permanent?

Apple is likely still investing in internal AI capabilities. This could be a stopgap while Apple builds its own competitive model. Or it could be a long-term strategic partnership, with Google becoming Apple's AI infrastructure provider the same way it's been the default search provider for 15+ years.

Either way, March 2026 marks the end of old Siri. What comes next will define whether Apple can compete in the AI era — or whether it's permanently dependent on Google's AI infrastructure.

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.