Anthropic Quietly Rolls Back AI Safety Commitments to Stay Competitive

Anthropic, the AI safety company founded by ex-OpenAI researchers who left over safety concerns, just amended its core safety principles. The move signals that even the most safety-focused AI labs are bending under competitive pressure.

Anthropic amended its self-imposed AI safety guidelines on February 27, 2026, scaling back commitments the company established when it was founded in 2021. According to CBC News, the changes are aimed at staying competitive as the AI race accelerates.

This is the company that was founded specifically because its leaders — former OpenAI researchers including Dario and Daniela Amodei — believed OpenAI was moving too fast and taking too many risks. Now Anthropic is relaxing the same safety guardrails it championed.

The irony is brutal, and the implications run deep.

What Changed

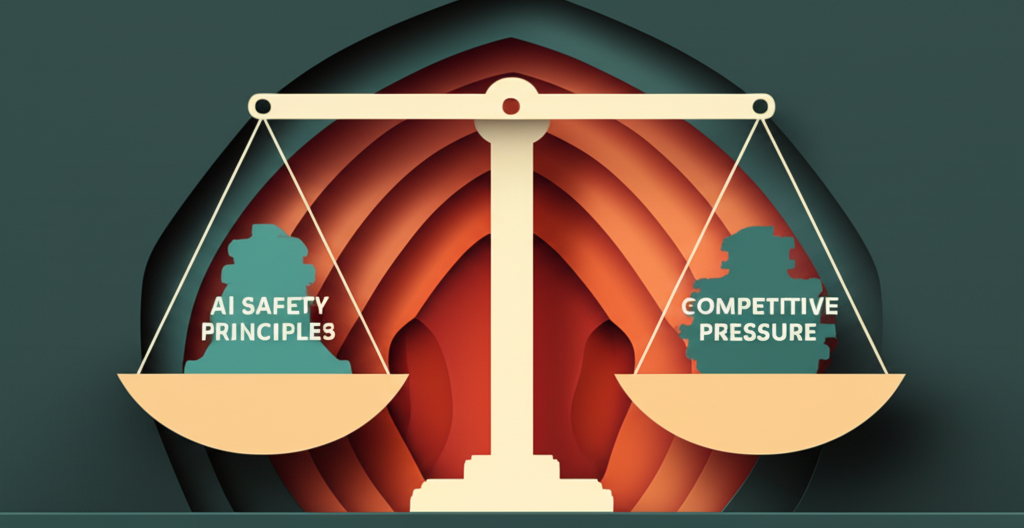

Anthropic hasn't released the full details publicly, but the amendments reportedly weaken provisions designed to prevent dangerous AI development. The original framework included commitments to:

- Extensive red-teaming before major releases

- Conservative capability thresholds for public deployment

- Transparency about model limitations and risks

- Slow, deliberate scaling tied to safety research progress

The new version appears to give Anthropic more discretion to accelerate deployment timelines and relax some testing requirements.

The Competitive Reality

Here's what's driving this: OpenAI, Google, and Meta are shipping fast. OpenAI's Operator agent launched in January. Google's 1.2-trillion-parameter Gemini model is powering Apple's Siri overhaul. Meta's Llama 4 is rumored for Q2 2026.

Anthropic's Claude is technically excellent — many developers prefer it for code generation and complex reasoning — but the perception gap is growing. OpenAI owns mindshare. Google has distribution. Meta has open-source momentum.

If Anthropic stays cautious while competitors race ahead, they risk irrelevance. Investors notice. Enterprise customers notice. Developers building on the platform notice.

The safety-first positioning that defined Anthropic's brand is now a competitive liability.

The Slippery Slope

This is exactly the dynamic AI safety researchers warned about: competitive pressure forcing labs to compromise on safety to keep up.

Anthropic was supposed to be the counterexample — the company that proved you could build frontier AI responsibly and still win commercially. If even they're rolling back commitments, it suggests the market won't tolerate safety-conscious slowness.

The problem compounds. When Anthropic loosens safety standards, it gives OpenAI and Google permission to do the same. "Even Anthropic is moving faster" becomes justification for everyone else to accelerate.

No single company can afford to be significantly more cautious than competitors when the commercial stakes are this high.

What Anthropic Says

Anthropic hasn't issued a detailed public statement, but the implication is that they believe the original framework was too restrictive given the current understanding of AI risks and competitive dynamics.

The company would likely argue that:

- Safety research has advanced, allowing safer rapid deployment

- Overly conservative deployment slows beneficial AI adoption

- Real-world deployment data is necessary for safety improvements

- Market position affects ability to invest in safety research long-term

These aren't unreasonable points. Safety isn't binary. The original commitments were written in 2021 when GPT-3 was state-of-the-art. The landscape has changed.

But the optics are terrible. The company founded on AI safety principles is now publicly weakening those principles under competitive pressure.

The Enterprise Angle

For enterprise customers evaluating Claude vs GPT vs Gemini, this changes the calculation.

Anthropic's safety positioning was a selling point, especially for regulated industries like finance and healthcare that need defensible AI deployment policies. "We chose Claude because Anthropic prioritizes safety" was a line CIOs could take to the board.

If Anthropic is now optimizing for speed over safety, that advantage diminishes. The differentiation becomes purely technical — model performance, API reliability, pricing — where OpenAI and Google have advantages.

What This Means For Your Business

If you're building on AI platforms or evaluating AI strategy:

- Vendor safety claims need skepticism: Anthropic's rollback shows that safety commitments are negotiable when commercial pressure hits. Don't assume any vendor will maintain conservative positions if doing so costs them market share.

- Build your own safety framework: Relying on AI vendors to be cautious is no longer a viable strategy. If you need safety guarantees, build them into your own deployment processes through red-teaming, monitoring, and human oversight.

- The race is accelerating: This signals that all major labs will move faster in 2026. Expect more capable models, more aggressive deployment, and more corner-cutting on safety testing. Plan accordingly.

Looking Ahead

The fundamental question is whether AI safety and competitive success are compatible.

Anthropic's bet was yes: being the responsible AI lab would be a competitive advantage. This rollback suggests they no longer believe that, at least not in the short term.

The alternative interpretation: Anthropic still cares about safety but has concluded that market position is necessary to fund safety research long-term. Losing to OpenAI means losing influence over how AI develops.

Either way, the result is the same: safety concerns are subordinate to competitive dynamics. That's the uncomfortable truth this rollback reveals.

Watch what Anthropic ships next. If Claude 4 launches with aggressive capabilities and short testing cycles, we'll know the new policy isn't just words.

Build AI Systems You Can Trust

At AI Agents Plus, we help companies deploy AI responsibly — building systems with proper oversight, testing, and safety mechanisms.

Whether you need:

- Custom AI Agents — Autonomous systems with built-in safety guardrails and human oversight

- Rapid AI Prototyping — Fast iteration with proper testing and validation frameworks

- Voice AI Solutions — Conversational interfaces designed with user safety and privacy in mind

We've built production AI for startups and enterprises across Africa and globally.

Ready to build AI the right way? Let's talk →

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.