Pentagon May Label Anthropic a Supply Chain Risk — What It Means for AI

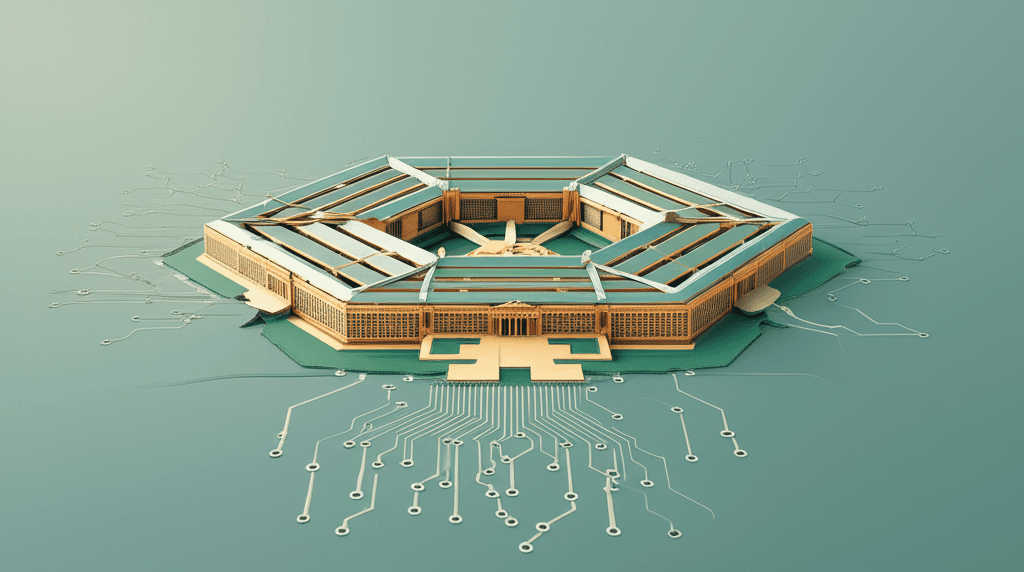

The Department of Defense is reportedly considering designating Anthropic as a supply chain risk, which would force defense contractors to cut ties with the Claude maker. This signals a growing rift between safety-focused AI developers and military adopters of AI.

The Department of Defense is preparing to designate Anthropic — maker of the Claude AI assistant — as a "supply chain risk," according to Axios. If finalized, this move would effectively ban any company doing business with the U.S. military from working with Anthropic.

This is a significant escalation in the ongoing tension between AI companies that prioritize safety constraints and government agencies seeking unrestricted AI capabilities.

What Happened

According to the Axios report, the DoD and Anthropic have been negotiating for months over how the military can use Anthropic's AI tools. The sticking point appears to be Anthropic's safety-first approach, which includes usage restrictions that conflict with certain military applications.

Should Anthropic receive the designation, defense contractors and any company in the military supply chain would be forced to sever their relationship with the AI company.

Why This Matters

This isn't just about one AI company. It's a signal of how governments are starting to view AI safety restrictions — not as responsible development practices, but as potential obstacles to national security.

Anthropic has built its brand on being the "responsible AI" company. Claude famously refuses certain requests that other AI systems might fulfill. This principled stance has won them enterprise customers who value predictability and safety.

But that same stance now appears to be creating friction with the world's largest military.

The Technical Angle

Anthropic's Constitutional AI approach means Claude is trained with specific principles that limit certain behaviors. These aren't just surface-level filters — they're baked into the model's training.

For military applications, this could mean Claude refusing to:

- Generate certain tactical content

- Assist with weapons-adjacent tasks

- Operate without transparency about its limitations

These constraints are features for commercial customers. For military operations, they may be seen as bugs.

What This Means For Your Business

If you're building AI products or evaluating AI vendors, this development deserves attention:

- If you're in the defense supply chain: Start assessing your AI vendor relationships now. A designation like this could force rapid changes to your tech stack.

- If you're using Claude for enterprise: Anthropic's commercial business isn't directly affected, but watch for spillover effects on funding and development priorities.

- If you're evaluating AI safety vs. capability tradeoffs: This is a concrete example of how safety constraints can have real business consequences. Your own AI policies may face similar scrutiny.

Looking Ahead

This situation highlights an emerging fault line in the AI industry: the tension between safety-constrained AI and unrestricted capability. Companies will increasingly need to pick sides — or find ways to serve both markets with different products.

For Anthropic, the irony is sharp. Just weeks ago, they ran a Super Bowl ad emphasizing that Claude won't do certain things. Now that restraint may cost them access to one of the world's largest AI budgets.

We'll be watching how this plays out. It could reshape how AI companies think about government contracts — and whether safety-first positioning is sustainable in a world where governments want AI without guardrails.

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.