Google Launches Gemma 4: Open-Weight Models Challenge Closed AI

Google DeepMind has released Gemma 4, a family of four open-weight AI models under the Apache 2.0 license. With more than 400 million downloads across the Gemma series and a focus on agentic workflows, the release signals a major bet on democratized AI infrastructure.

Google DeepMind just dropped Gemma 4, and it's not just another model release. It's a statement about where AI is headed—and who gets to build it.

The company released four open-weight models under the Apache 2.0 license, designed specifically for agentic workflows and on-device deployment. That licensing choice matters: Apache 2.0 means enterprises can actually use these models in production without negotiating custom agreements or worrying about restrictive terms.

What's In the Box

Gemma 4 comes as a family of four models, each optimized for different deployment scenarios. Google built them to run everywhere from edge devices to workstations, prioritizing local deployment over cloud dependence.

The focus on agentic workflows is deliberate. These aren't just better chatbots—they're designed for autonomous systems that need to perceive their environment, reason through complex decisions, and take action without constant human oversight.

Google says the Gemma series has seen over 400 million downloads since the original release in February 2024. That is a cumulative count across every Gemma version rather than a measure of active deployments, but it still points to real market appetite for alternatives to closed models.

Why Open-Weight Matters Now

The timing here is strategic. While OpenAI, Anthropic, and others double down on increasingly closed frontier models, Google is making a different bet: that the real value in AI infrastructure comes from adoption and ecosystem, not from keeping weights behind API walls.

Open-weight models change the economics of enterprise AI deployment. You're not paying per token. You're not subject to a provider's rate limits. You can fine-tune on proprietary data without sending it to a third party. For regulated industries or companies with strict data sovereignty requirements, that's not a nice-to-have; it's a prerequisite for adoption.

The Apache 2.0 license is particularly significant. It's more permissive than many "open" AI licenses that restrict commercial use or require derivative works to be open-sourced. Enterprises can build commercial products, keep their modifications private, and deploy without legal ambiguity.

The Agentic AI Play

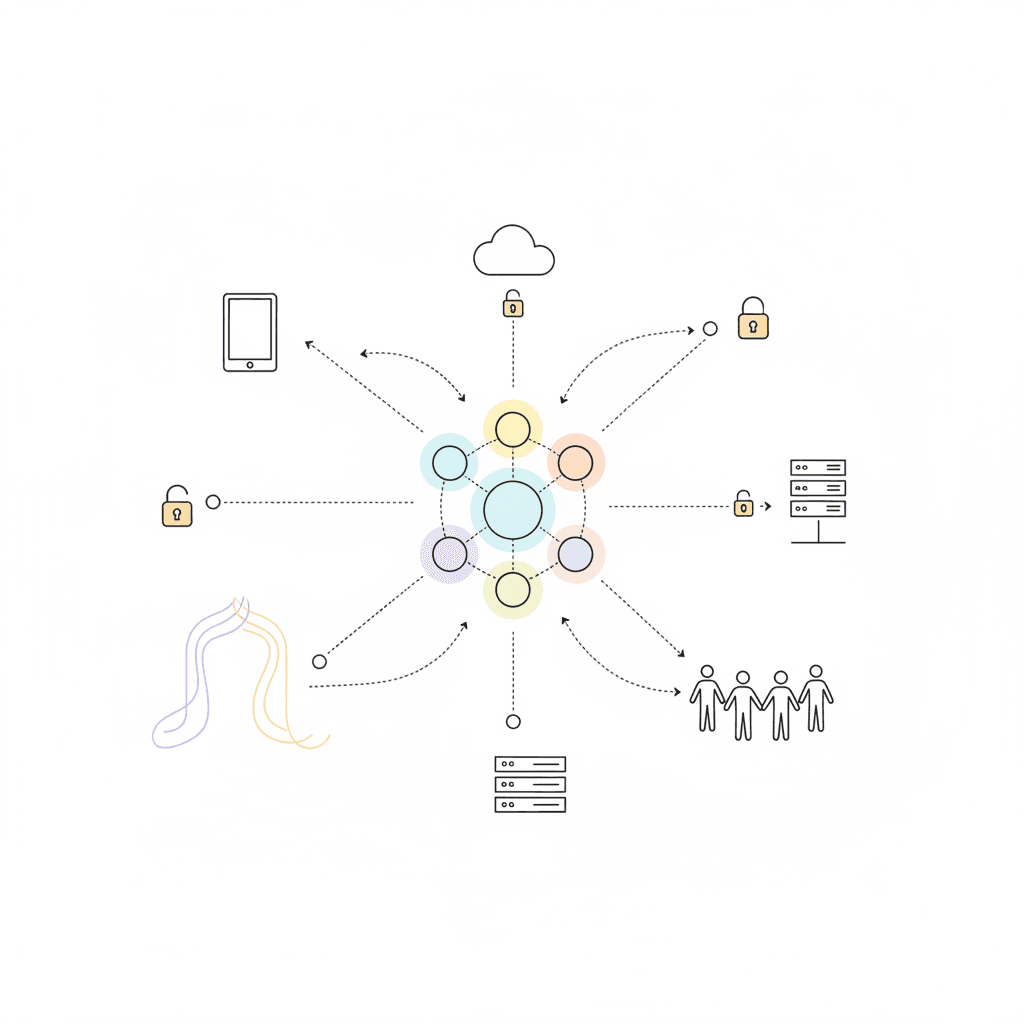

Google specifically designed Gemma 4 for agentic workflows. That means systems that can:

- Perceive: Process multimodal inputs from their environment

- Reason: Make decisions based on complex, changing conditions

- Act: Execute tasks autonomously without constant human intervention
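
The three steps above can be sketched as a small control loop. This is a minimal illustration, not Gemma 4's API: the `Agent` class, the `decide` callback (a stand-in for a model call), and the inventory example are all hypothetical names chosen for the sketch.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Toy perceive-reason-act loop; `decide` stands in for a model call."""
    decide: Callable[[dict], str]              # hypothetical: observation -> action name
    tools: dict[str, Callable[[dict], dict]]   # hypothetical registry of actions
    log: list[str] = field(default_factory=list)

    def run(self, state: dict, max_steps: int = 10) -> dict:
        for _ in range(max_steps):             # hard cap so the loop always ends
            observation = dict(state)          # perceive: snapshot the environment
            action = self.decide(observation)  # reason: choose the next action
            self.log.append(action)            # keep an audit trail of decisions
            if action == "stop":
                break
            state = self.tools[action](state)  # act: apply the chosen tool
        return state


# Hypothetical inventory task: reorder in batches until stock reaches the target.
def decide(obs: dict) -> str:
    return "reorder" if obs["stock"] < obs["target"] else "stop"


def reorder(state: dict) -> dict:
    return {**state, "stock": state["stock"] + state["batch"]}


agent = Agent(decide=decide, tools={"reorder": reorder})
final = agent.run({"stock": 2, "target": 10, "batch": 4})
print(final["stock"], agent.log)
```

In a real deployment the rule-based `decide` would be replaced by a call to a locally hosted model, while the step cap and the decision log would likely stay, since they are what make an autonomous loop auditable.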

This isn't theoretical. Companies are already building AI agents that handle customer service escalations, manage inventory across supply chains, and automate code review processes. These systems need models that can run locally, make reliable decisions, and integrate into existing infrastructure.

Deployment strategy matters more when you run the models yourself rather than call an API, because serving, scaling, monitoring, and updates become your responsibility.

On-Device vs. Cloud: A Fundamental Shift

Gemma 4's ability to run on devices from edge endpoints to workstations challenges the cloud-first assumption that's dominated AI deployment.

Running models locally means:

- Lower latency: No round-trip to the cloud

- Better privacy: Sensitive data never leaves your infrastructure

- Cost predictability: You're not paying per inference

- Offline capability: Systems work without internet connectivity

For manufacturing, healthcare, or financial services, these aren't technical preferences—they're operational requirements.
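
One way to make those requirements explicit is a routing policy that treats privacy and offline operation as hard constraints. The sketch below is an assumption about how a hybrid setup might be organized; `Request`, `Target`, and `route` are hypothetical names, not part of any Gemma or Google tooling.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Request:
    contains_sensitive_data: bool
    online: bool
    needs_large_model: bool = False  # hypothetical flag for capacity-heavy tasks


def route(req: Request) -> Target:
    """Pick where to run inference under the stated (assumed) policy."""
    # Privacy and offline operation are hard constraints: stay on-device.
    if req.contains_sensitive_data or not req.online:
        return Target.LOCAL
    # Otherwise only capacity-heavy requests leave the local machine.
    return Target.CLOUD if req.needs_large_model else Target.LOCAL


print(route(Request(contains_sensitive_data=True, online=True, needs_large_model=True)))
```

The ordering of the checks is the design choice: a sensitive request stays local even when a larger cloud model would do better, which is the trade-off regulated industries typically have to accept.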

What This Means For Your Business

If you're building or buying AI systems, Gemma 4 represents a real alternative to the closed-model default.

If you're building AI products, consider whether open-weight models could reduce your infrastructure costs while giving you more control over performance and deployment. The ability to fine-tune on your own data without sending it to a third party changes what's possible in specialized domains.

If you're buying AI solutions, ask vendors whether they're locked into specific model providers. Solutions built on open-weight models give you more flexibility and reduce vendor lock-in risk.

If you're evaluating AI strategy, think about the total cost of ownership beyond API pricing. Per-token costs add up fast at scale. Running your own models requires different infrastructure, but the economics can be dramatically better once you're past the experimentation phase.
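
The break-even point behind that claim is simple arithmetic. The sketch below assumes a flat monthly infrastructure cost for self-hosting plus a small marginal cost per million tokens; every figure in it is a made-up placeholder, not a quoted price.

```python
import math


def monthly_api_cost(tokens_per_month: float, price_per_million: float) -> float:
    """API spend for a given monthly token volume."""
    return tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(fixed_monthly_cost: float,
                      price_per_million: float,
                      self_host_cost_per_million: float = 0.0) -> float:
    """Monthly token volume at which self-hosting costs the same as the API."""
    margin = price_per_million - self_host_cost_per_million
    if margin <= 0:
        return math.inf  # self-hosting never catches up at these prices
    return fixed_monthly_cost / margin * 1_000_000


# Placeholder inputs: $3,000/month in hardware and ops, $2.00 per million
# tokens via API, $0.50 per million tokens in marginal self-hosting cost.
volume = break_even_tokens(3000.0, 2.0, 0.5)
print(f"{volume:,.0f} tokens/month")
```

With these placeholder inputs the two options cost the same at 2 billion tokens per month. Below that volume the API is cheaper; above it, self-hosting wins by the per-token margin on each additional million tokens.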

Security also changes when you control the model weights. You can implement custom security controls, audit model behavior more thoroughly, and enforce compliance with internal policies.

The Competitive Landscape

Google isn't alone in the open-weight space. Meta's Llama series, Mistral AI's models, and newer entrants like DeepSeek are all competing for developer mindshare.

But Google brings distinct strengths: deep research capabilities, extensive cloud infrastructure (for those who want a hybrid approach), and integration with the broader Google Cloud ecosystem. The 400 million downloads suggest it is doing well on distribution, although download counts alone don't show how many of those models reach production.

The real competition isn't between open-weight models—it's between the open-weight and closed-weight approaches to AI infrastructure. Google is betting that openness wins in the long run, at least for a significant segment of enterprise use cases.

Looking Ahead

Watch for how enterprises actually deploy Gemma 4. The gap between "available" and "production-ready" can be substantial. Questions to track:

- How do fine-tuning costs compare to API costs at scale?

- What infrastructure do companies need to run these models reliably?

- Which industries adopt open-weight models first, and why?

- How do governance and compliance requirements affect the choice between open and closed models?

Google made a big move here. Whether it reshapes enterprise AI deployment depends on what companies actually build with it.

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.