LLM Agent Telemetry Signals and Monitoring Best Practices

Learn essential LLM agent telemetry signals and monitoring best practices for production AI systems. Track performance metrics, detect anomalies, and optimize behavior through comprehensive observability.

Effective monitoring of LLM agent telemetry signals is critical for maintaining reliable AI systems in production. As organizations deploy increasingly sophisticated AI agents, understanding how to track performance metrics, detect anomalies, and optimize behavior through comprehensive telemetry has become a fundamental requirement for successful AI operations.

What Are LLM Agent Telemetry Signals?

LLM agent telemetry signals are measurable data points that provide visibility into how your AI agents are performing in real-world environments. These signals encompass everything from token usage and response latencies to conversation quality scores and error rates. Unlike traditional application monitoring, LLM agent telemetry must account for the non-deterministic nature of large language models while still providing actionable insights.

Key telemetry categories include:

- Performance Metrics: Response time, token consumption, throughput

- Quality Signals: Hallucination rates, semantic accuracy, task completion

- Cost Tracking: API usage, compute resources, per-interaction costs

- User Experience: Conversation abandonment, retry rates, satisfaction scores

- System Health: Error rates, timeout frequencies, fallback triggers

Why LLM Agent Telemetry Matters

Without proper monitoring, AI agents become black boxes that fail unpredictably. Telemetry transforms opaque AI systems into observable, debuggable, and continuously improving services. Organizations that implement comprehensive monitoring see 40-60% faster incident resolution and significantly lower operational costs.

Production AI agents face unique challenges: prompt injection attempts, context window exhaustion, API rate limiting, and subtle quality degradation over time. Telemetry signals provide early warning systems that catch these issues before they impact end users. Learn more about AI agent security best practices to complement your monitoring strategy.

Essential Telemetry Signals to Track

1. Token Usage and Cost Metrics

Track tokens per request, cumulative daily usage, and cost per conversation. Set alerts when usage patterns deviate from baselines — sudden spikes often indicate prompt engineering issues or unexpected user behavior.

- Prompt tokens vs completion tokens ratio

- Average tokens per successful interaction

- Cost per user session

- Daily/weekly token burn rate
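As a rough illustration, the metrics above can be derived from per-request token counts. This is a minimal sketch: the `Usage` record and the per-1,000-token prices are hypothetical placeholders, not any provider's actual schema or rates.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens; substitute your provider's rates.
PROMPT_PRICE_PER_1K = 0.50
COMPLETION_PRICE_PER_1K = 1.50


@dataclass
class Usage:
    """Token counts for a single request (illustrative schema)."""
    prompt_tokens: int
    completion_tokens: int


def request_cost(usage: Usage) -> float:
    """Cost of one request in USD under the assumed prices."""
    return (
        usage.prompt_tokens / 1000 * PROMPT_PRICE_PER_1K
        + usage.completion_tokens / 1000 * COMPLETION_PRICE_PER_1K
    )


def conversation_summary(requests: list[Usage]) -> dict:
    """Aggregate token and cost metrics for one conversation."""
    prompt = sum(u.prompt_tokens for u in requests)
    completion = sum(u.completion_tokens for u in requests)
    return {
        "prompt_tokens": prompt,
        "completion_tokens": completion,
        # Prompt-to-completion ratio; None when nothing was generated.
        "prompt_completion_ratio": prompt / completion if completion else None,
        "cost_usd": sum(request_cost(u) for u in requests),
    }
```

Summing these summaries per user session or per day gives the session cost and burn-rate figures listed above.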

2. Response Quality Indicators

Implement automated quality checks using semantic similarity scores, response coherence metrics, and task-specific success criteria. For customer service agents, track resolution rates; for coding assistants, monitor syntax validity.
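For the coding-assistant case, one simple task-specific check is whether generated code parses at all. The sketch below assumes the assistant emits Python and uses only the standard-library `ast` module. The function names are illustrative, and parsing successfully says nothing about whether the code is correct.

```python
import ast


def is_valid_python(code: str) -> bool:
    """Return True if the generated snippet parses as Python source."""
    try:
        ast.parse(code)
        return True
    except (SyntaxError, ValueError):
        # ValueError covers inputs such as source containing null bytes
        # on some Python versions.
        return False


def syntax_validity_rate(snippets: list[str]) -> float:
    """Fraction of generated snippets that parse; 0.0 for an empty batch."""
    if not snippets:
        return 0.0
    return sum(is_valid_python(s) for s in snippets) / len(snippets)
```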

3. Latency and Performance

Monitor end-to-end response times, breaking down into API latency, processing time, and network overhead. P50, P95, and P99 percentiles reveal user experience better than averages. Target sub-3-second response times for conversational agents.
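To make the percentile point concrete, here is a small sketch using the nearest-rank method (one of several common percentile definitions). The helper names are illustrative.

```python
import math


def percentile(samples: list[float], p: int) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in the range 1..100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def latency_summary(samples: list[float]) -> dict:
    """Mean plus the P50/P95/P99 latencies for a batch of response times."""
    return {
        "mean": sum(samples) / len(samples),
        "p50": percentile(samples, 50),
        "p95": percentile(samples, 95),
        "p99": percentile(samples, 99),
    }
```

For example, with 95 responses at 0.2 seconds and 5 at 4.0 seconds, the mean is about 0.39 seconds, while P99 is 4.0 seconds and exposes the slow tail that the mean smooths over.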

4. Error and Fallback Rates

Track API errors, timeout frequencies, rate limit hits, and fallback mechanism triggers. A high fallback rate often indicates that your primary model is struggling with the current workload. Understanding AI agent context window management techniques can help reduce context-related errors.
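One way to compute these rates is to label each request with a single outcome and count the labels. The label names below are hypothetical. Use whatever categories your agent actually emits.

```python
from collections import Counter

# Hypothetical outcome labels, one per request.
OUTCOME_LABELS = ("ok", "api_error", "timeout", "rate_limited", "fallback")


def outcome_rates(outcomes: list[str]) -> dict[str, float]:
    """Share of requests per outcome label; all zeros for an empty batch."""
    counts = Counter(outcomes)
    total = len(outcomes)
    return {
        label: (counts[label] / total if total else 0.0)
        for label in OUTCOME_LABELS
    }
```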

5. Conversation Flow Metrics

Measure turns per conversation, abandonment rates by step, and user retry patterns. These signals reveal friction points in your agent's conversational design.
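A minimal sketch of these measurements, assuming each conversation is recorded with its turn count and a flag for whether the user reached their goal. Both fields are hypothetical and depend on how your agent defines completion.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Conversation:
    turns: int       # number of user turns before the conversation ended
    completed: bool  # hypothetical flag: did the user reach their goal?


def flow_metrics(conversations: list[Conversation]) -> dict:
    """Average turns, abandonment rate, and where abandonment happens."""
    if not conversations:
        return {"avg_turns": 0.0, "abandonment_rate": 0.0, "abandoned_at_turn": {}}
    abandoned = [c for c in conversations if not c.completed]
    return {
        "avg_turns": sum(c.turns for c in conversations) / len(conversations),
        "abandonment_rate": len(abandoned) / len(conversations),
        # Maps turn number to how many conversations were abandoned there.
        "abandoned_at_turn": dict(Counter(c.turns for c in abandoned)),
    }
```

A spike at one turn number in `abandoned_at_turn` points at the step of the dialogue worth reviewing first.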

LLM Agent Telemetry Best Practices

Implement Multi-Layer Observability

Combine real-time monitoring dashboards with historical trend analysis and automated anomaly detection. Use tools like LangSmith, Weights & Biases, or custom Prometheus/Grafana stacks.

- Real-time layer: Alert on immediate failures (API errors, timeouts)

- Trending layer: Identify gradual quality degradation

- Analysis layer: Root cause investigation with full conversation traces

Establish Baseline Metrics

New deployments have no history to compare against. Run controlled load tests and shadow deployments to establish normal operating ranges before going live. Track week-over-week trends to catch recurring weekly and seasonal variations.
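One simple way to turn load-test or shadow-deployment samples into a baseline is to record their mean and standard deviation, then flag later values that fall too many standard deviations away. This is a sketch only. The z-score threshold of 3 is an assumption, and skewed metrics such as latency may be better served by percentile-based ranges.

```python
from statistics import mean, stdev


def build_baseline(samples: list[float]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) of the baseline samples."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to build a baseline")
    return mean(samples), stdev(samples)


def is_anomalous(value: float, baseline: tuple[float, float],
                 z_threshold: float = 3.0) -> bool:
    """True when value lies more than z_threshold deviations from the mean."""
    center, spread = baseline
    if spread == 0:
        # A perfectly flat baseline: any different value counts as a deviation.
        return value != center
    return abs(value - center) / spread > z_threshold
```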

Use Structured Logging

Log every interaction with a consistent schema: user_id, session_id, timestamp, prompt_tokens, completion_tokens, model_version, success_status, and error_codes. Structured logs enable powerful querying and ML-based anomaly detection.

Monitor Model Drift

LLM providers update models regularly. Track quality metrics across model versions to detect when updates degrade performance for your use case. Pin specific model versions in production until you've validated changes.
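A sketch of a per-version comparison, assuming each interaction already carries a model version and a numeric quality score between 0 and 1. How that score is produced is up to you, and the 0.05 tolerance is an arbitrary illustrative value.

```python
from collections import defaultdict
from statistics import mean


def quality_by_version(records: list[tuple[str, float]]) -> dict[str, float]:
    """Mean quality score per model version from (version, score) pairs."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for version, score in records:
        grouped[version].append(score)
    return {version: mean(scores) for version, scores in grouped.items()}


def has_regressed(averages: dict[str, float], baseline_version: str,
                  candidate_version: str, tolerance: float = 0.05) -> bool:
    """True when the candidate's mean score drops more than `tolerance`
    below the baseline version's mean score."""
    return averages[baseline_version] - averages[candidate_version] > tolerance
```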

Implement Cost Controls

Set spending alerts at multiple thresholds. Monitor cost-per-user and identify outlier sessions that consume excessive tokens. For high-volume deployments, consider AI agent frameworks that offer built-in cost optimization.
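The two checks described here can be sketched as follows. The threshold fractions (50%, 80%, 100% of budget) and the "five times the median" outlier rule are assumptions chosen for illustration, not recommended values.

```python
from statistics import median


def crossed_thresholds(spend: float, budget: float,
                       fractions: tuple[float, ...] = (0.5, 0.8, 1.0)) -> list[float]:
    """Budget fractions that current spend has already reached."""
    return [f for f in fractions if spend >= budget * f]


def outlier_sessions(tokens_by_session: dict[str, int],
                     multiple: float = 5.0) -> list[str]:
    """Session IDs whose token usage exceeds `multiple` times the median."""
    if not tokens_by_session:
        return []
    typical = median(tokens_by_session.values())
    return sorted(
        sid for sid, tokens in tokens_by_session.items()
        if tokens > typical * multiple
    )
```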

Create Actionable Alerts

Alert fatigue kills monitoring programs. Focus on high-signal indicators:

- Error rate exceeds 5% over 10 minutes

- P95 latency crosses 5 seconds

- Hourly token consumption exceeds budget by 50%

- Hallucination detection score rises above your chosen threshold
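The first rule in the list can be sketched as a sliding window over recent requests. The class below is illustrative. It takes timestamps as plain seconds so it can be driven by any clock, and the defaults mirror the 5% over 10 minutes example.

```python
from collections import deque


class ErrorRateWindow:
    """Tracks the error rate over the most recent `window_seconds`."""

    def __init__(self, window_seconds: float = 600, threshold: float = 0.05):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self.events: deque[tuple[float, bool]] = deque()  # (timestamp, is_error)

    def record(self, timestamp: float, is_error: bool) -> None:
        """Add one request outcome and drop events older than the window."""
        self.events.append((timestamp, is_error))
        cutoff = timestamp - self.window_seconds
        while self.events and self.events[0][0] <= cutoff:
            self.events.popleft()

    def error_rate(self) -> float:
        """Share of requests in the window that failed; 0.0 when empty."""
        if not self.events:
            return 0.0
        return sum(1 for _, is_error in self.events if is_error) / len(self.events)

    def should_alert(self) -> bool:
        return self.error_rate() > self.threshold
```

In practice you would likely also require a minimum number of requests in the window, so that a single failure during a quiet period does not page anyone.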

Advanced Monitoring Techniques

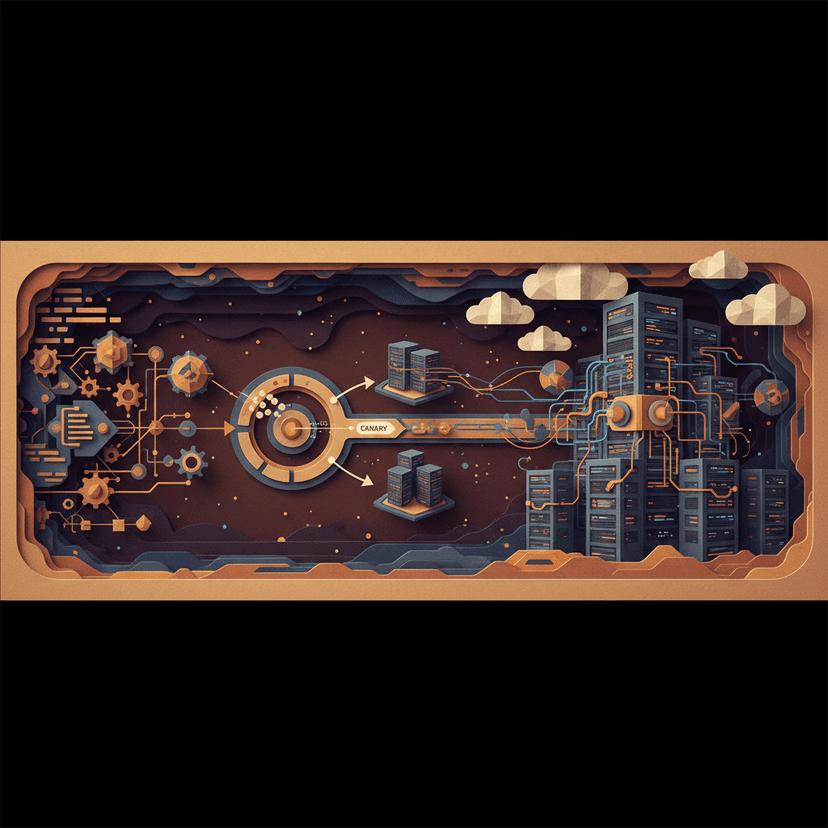

Canary Deployments: Route 5-10% of traffic to new model versions while comparing telemetry between cohorts.
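A common way to split traffic for a canary is to hash a stable identifier so that each user always lands in the same cohort. The sketch below assumes a string user ID. The function name and the 10% default are illustrative.

```python
import hashlib


def assign_cohort(user_id: str, canary_percent: int = 10) -> str:
    """Deterministically assign a user to the 'canary' or 'stable' cohort."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"
```

Tagging every telemetry record with the cohort name then lets you compare latency, error, and quality metrics between the two groups.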

Synthetic Monitoring: Run automated test conversations hourly to catch regressions before real users encounter them.
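A minimal sketch of such a check, assuming the agent can be called as a function from prompt to reply and that each test case pairs a prompt with a phrase the reply is expected to contain. Both are simplifying assumptions, since real checks usually need richer assertions. Scheduling the run hourly is left to your job scheduler.

```python
from typing import Callable


def run_synthetic_checks(agent: Callable[[str], str],
                         cases: list[tuple[str, str]]) -> list[str]:
    """Run scripted prompts against the agent and return failure descriptions."""
    failures = []
    for prompt, expected_phrase in cases:
        try:
            reply = agent(prompt)
        except Exception as exc:  # any crash counts as a failed check
            failures.append(f"{prompt!r}: raised {type(exc).__name__}")
            continue
        if expected_phrase.lower() not in reply.lower():
            failures.append(f"{prompt!r}: reply missing {expected_phrase!r}")
    return failures
```

An empty list means every scripted conversation passed. Anything else can feed the same alerting path as production errors.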

User Feedback Integration: Capture thumbs up/down ratings and correlate with technical metrics to identify quality-performance trade-offs.

Prompt Performance Tracking: Version your prompts and track success metrics per variant. A/B test prompt changes using telemetry to guide optimization.

Common Monitoring Mistakes to Avoid

Over-relying on averages: Medians and percentiles reveal user experience better than means.

Ignoring context length growth: Sessions that approach token limits behave unpredictably. Monitor conversation length distributions.
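As a rough sketch, sessions approaching the limit can be flagged by comparing their running token count with a fraction of the context window. The 80% warning ratio is an assumption, and the context limit must come from your model's documentation.

```python
def near_limit_sessions(session_tokens: dict[str, int], context_limit: int,
                        warn_ratio: float = 0.8) -> list[str]:
    """Session IDs whose token count has reached warn_ratio of the limit."""
    cutoff = context_limit * warn_ratio
    return sorted(
        sid for sid, tokens in session_tokens.items() if tokens >= cutoff
    )
```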

Missing cold-start metrics: First interactions often have different performance characteristics than subsequent turns.

Neglecting silent failures: Agents that produce plausible but incorrect answers won't trigger error alerts. Implement quality scoring alongside error tracking.

Forgetting about costs: Token usage can spiral quickly. Daily cost monitoring should be non-negotiable.

Conclusion

LLM agent telemetry signals and monitoring best practices form the foundation of reliable AI systems. As agents become more autonomous and handle higher-stakes tasks, comprehensive observability transitions from nice-to-have to mission-critical infrastructure.

Organizations that invest in robust monitoring see faster development cycles, lower operational costs, and significantly better user experiences. Start with core metrics — latency, errors, and token usage — then expand to quality signals and conversation analytics as your system matures.

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.